By Stephen DeAngelis

Executive Summary

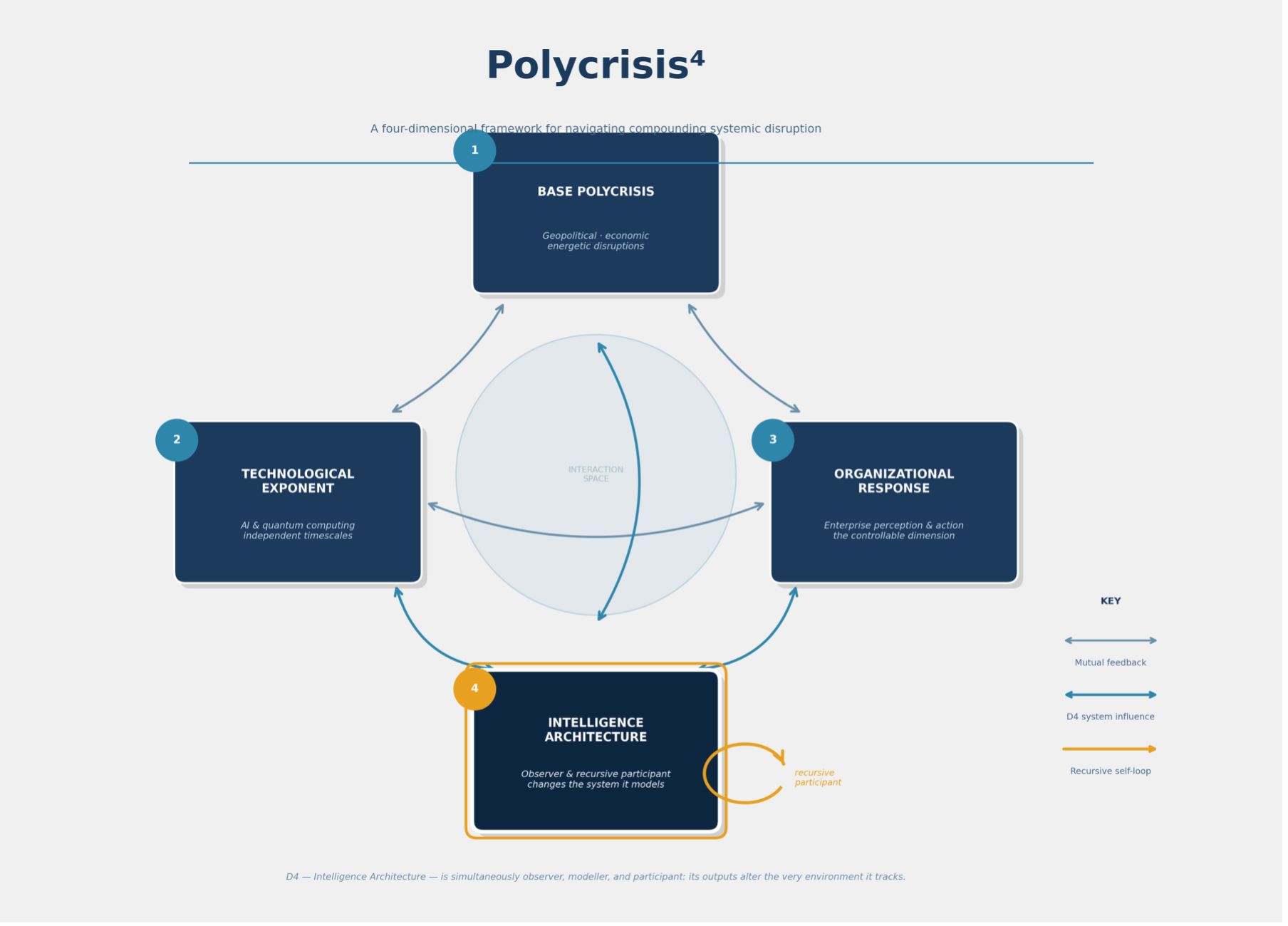

The previous brief in this series, Polycrisis³, argued that compounding crises, exponential technology, and the organizational response function form a three-dimensional problem. It concluded that the false resolution, the period of apparent calm following an acute crisis, is the most dangerous phase. It called for a continuously operating enterprise intelligence architecture as the answer. This brief asks the question that follows. What happens when that architecture is deployed?

The answer is that the architecture itself becomes a fourth participant in the system. When an enterprise embeds continuous AI-driven sensing and optimization into its operations, it does not simply observe the competitive environment. It changes it. Its supply chain decisions redirect material flows. Its pricing optimization reshapes market dynamics. Its risk sensing alters the information available to every other player. The architecture is not a telescope pointed at the crisis. It is a new body in the system.

This changes the nature of the game. The intelligence architecture senses the environment, acts on it, and by acting, changes it. Then it senses the changed environment. If the recursive loop is well-designed, each cycle improves accuracy and competitive position. If it is poorly designed, each cycle amplifies error. The difference between the two is not gradual. A companion computational illustration shows that it is sharp, with a clearly defined boundary separating architectures that converge toward stability from those that diverge toward catastrophe. Getting this right is not a question of incremental improvement. It is the defining architectural decision for any enterprise operating under persistent, compounding disruption.

From Three Dimensions to Four

In Polycrisis³, I described three interacting dimensions that define the current risk environment. The first is the base Polycrisis of geopolitical, economic, and energetic disruptions, the structural instabilities in trade, energy, and sovereign relationships that the Iran-US ceasefire paused but did not resolve. The second is the technological exponent of artificial intelligence and quantum computing, which operates on its own timeline and does not observe ceasefires or trade negotiations. BCG's research continues to show the top five percent of AI-mature companies achieving 1.7 times the revenue growth and 3.6 times the total shareholder return of lagging firms, a gap that widens with each investment cycle regardless of geopolitical conditions.1 The third is the organizational response function, the dimension most directly within leadership's control and most degraded by the false resolution dynamic.

That brief closed with a call for a specific kind of architecture, a continuously operating intelligence layer that senses across all timescales simultaneously, acts in real time, and learns from the outcomes of its own actions. I called the capacity to operate across near-term, medium-term, and long-term timescales at once Timescale Coherence. The Sense, Think, Act & Learn architecture was proposed as the mechanism for achieving it.2

What I did not address in Polycrisis³ is what happens to the system itself when that architecture goes live. I want to address it now, because the implications are significant and largely unexamined in the enterprise strategy conversation. Most discussions of enterprise AI treat technology as a tool that improves internal operations. Better forecasts, faster decisions, lower costs. That framing is accurate as far as it goes, but it misses the structural change that occurs when the architecture begins operating continuously in a competitive market.

When an organization deploys a continuously operating intelligence architecture, it is not simply adding a tool. It is introducing a new participant into a complex adaptive system. The architecture does not sit outside the environment and report on it. It operates inside the environment and changes it. This is the transition from Polycrisis³ to Polycrisis⁴. The superscript is not arbitrary.

The fourth dimension is the intelligence architecture itself, now active, now coupled to the three dimensions it was built to perceive.

This is an observable dynamic that is already playing out in competitive markets. And it changes the game for everyone.

The Architecture as Participant

The insight at the center of this brief can be stated in plain language. When you deploy continuous AI-driven sensing and optimization, your actions change the competitive environment for everyone else. George Soros documented this dynamic in financial markets, showing that participants' models of the market change the market itself.3 The Lucas critique established the same principle in macroeconomics, demonstrating that policy interventions based on statistical models invalidate the models.4 Heinz von Foerster formalized it in cybernetics through his concept of eigenforms, the stable patterns that emerge when a recursive process converges.5 But the practical implications for enterprise architecture have not been systematically examined. I want to walk through what happens in concrete terms.

Consider a large consumer products manufacturer that deploys an intelligence layer capable of continuous demand sensing, real-time price optimization, and dynamic supply allocation. Every decision that architecture generates ripples outward. When it detects a demand shift and reallocates inventory before competitors perceive the same shift, it captures margin that would otherwise have been distributed across the market. When it optimizes promotional pricing in real time, it changes the price signals that every other company's planning system ingests. When it senses a supplier risk and diversifies sourcing ahead of a disruption, it consumes available alternative capacity that competitors will need when they detect the same risk days or weeks later. When it identifies that a specific retailer's demand for a product category is about to spike based on weather patterns, promotional calendars, and local economic indicators, and it pre-positions inventory accordingly, it changes fill rates across the category for every manufacturer serving that retailer.

None of these effects are speculative. They are the natural consequence of operating faster and with better information than the prevailing market cycle. McKinsey's research has shown that ninety percent of supply chain leaders reported significant disruptions in 2024, with an average response time of two weeks.6 An intelligence architecture that can detect and respond within hours rather than weeks does not simply respond faster. It reshapes the environment that every two-week responder will eventually encounter. David Teece's work on dynamic capabilities describes how firms that can sense opportunities, seize them, and transform their resource base in response gain durable competitive advantage.7 What the fourth-body dynamic adds is the recognition that the sensing and seizing itself alters the environment that every firm is trying to sense and seize within.

Donald MacKenzie documented a parallel phenomenon in financial markets. He showed that the Black-Scholes options pricing model did not merely describe derivatives markets. It changed them. Traders who adopted the model altered their behavior in ways that made the model's predictions more accurate, until the conditions changed and the model's assumptions broke down catastrophically.8 The intelligence architecture creates a similar reflexive loop. It models the environment, acts on the model, and by acting, changes the environment the model was built to represent.

George Soros described the same dynamic in his theory of reflexivity. Market participants do not passively observe the market. Their observations inform their actions, and their actions change the market they were observing. The thinking participant and the situation in which it participates are not independent of each other.3 Soros was describing human traders. What changes in Polycrisis⁴ is that the architecture itself becomes the reflexive participant, operating continuously at a speed and scale that no human trading desk or planning team can match.

This is what I mean by architectural endogeneity, the condition in which the intelligence system and its environment are recursively coupled.9 The architecture does not observe from outside. It operates from within.

The Recursive Loop

The practical consequence of architectural endogeneity is a recursive feedback loop that either stabilizes or destabilizes the organization that deploys it.

The loop works as follows. The architecture senses the environment and builds a model of current conditions. It generates decisions based on that model. Those decisions change the environment. The architecture then senses the changed environment and updates its model. If the update process is well-designed, each cycle reduces the gap between the model and reality. The model gets more accurate. The decisions get better. The competitive position compounds. Heinz von Foerster, one of the founders of second-order cybernetics, described a related concept that he called eigenform, a stable pattern that emerges when a recursive process converges.5 The well-designed intelligence architecture converges toward its own eigenform, a self-consistent model of the environment that accounts for the architecture's own effects on that environment.
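
The loop described above can be sketched in a few lines. This is a toy illustration under stated assumptions, not the architecture itself: the linear environment response, the function names `sense` and `run_loop`, and the values of `BASELINE`, `IMPACT`, and `learning_rate` are all hypothetical. The architecture acts on its model, the action shifts what it next observes, and the model is updated toward the observation. The self-consistent value where model and observation agree plays the role of the eigenform.

```python
def sense(baseline: float, impact: float, action: float) -> float:
    """Observed environment after the architecture's own action has shifted it."""
    return baseline - impact * action


def run_loop(baseline: float, impact: float, learning_rate: float,
             cycles: int, initial_model: float = 0.0) -> float:
    """Repeat the sense, act, and update cycle and return the final model value."""
    model = initial_model
    for _ in range(cycles):
        # Assumption: the action taken is simply the current model estimate.
        observed = sense(baseline, impact, action=model)
        # Move the model toward what was observed by a fraction of the gap.
        model += learning_rate * (observed - model)
    return model


# Hypothetical parameter values chosen only for illustration.
BASELINE, IMPACT = 100.0, 0.25

# Self-consistent value: the model m that satisfies m = BASELINE - IMPACT * m.
fixed_point = BASELINE / (1.0 + IMPACT)

# Moderate learning rate: each cycle shrinks the gap to the fixed point.
converged = run_loop(BASELINE, IMPACT, learning_rate=0.5, cycles=50)

# Overly aggressive learning rate: each cycle overshoots and the gap grows.
diverged = run_loop(BASELINE, IMPACT, learning_rate=2.0, cycles=50)

print(f"fixed point: {fixed_point:.2f}")
print(f"moderate learning rate ends at: {converged:.2f}")
print(f"aggressive learning rate ends at: {diverged:.3e}")
```

In this toy, each cycle multiplies the gap to the fixed point by `1 - learning_rate * (1 + IMPACT)`, so the same loop converges or diverges depending only on whether that factor has magnitude below or above one.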

If the update process is poorly designed, the opposite happens. Each cycle introduces errors that feed into the next cycle’s sensing. The model drifts further from reality. The decisions degrade. And the degradation is invisible to the organization because the architecture is reporting on an environment that its own actions have already distorted. I have watched versions of this failure mode in organizations that deployed optimization tools without closing the feedback loop. The tool generates a recommendation. The team acts on it. The outcome changes the conditions. But the tool does not observe the outcome, so its next recommendation is based on conditions that no longer exist. In a stable environment, this error is tolerable. In a Polycrisis⁴ environment, where the conditions are already shifting under the influence of multiple interacting forces, the error compounds.

This is the difference between a stabilizing and a destabilizing architecture, and it matters more than any single technology decision a company will make in the next five years.

The concept has a formal analog in mathematics. A contraction mapping is a function that, when applied repeatedly, converges to a fixed point. Each iteration brings the output closer to a stable answer. The opposite, an expansive mapping, diverges with each iteration. The difference between the two is determined by a single parameter, whether the contraction factor is below or above one.10 Below one, the system converges. Above one, it diverges. At the boundary, the system is neutrally stable, neither improving nor degrading, but vulnerable to any perturbation that pushes it to one side or the other.
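
Written out, the idea looks like this. The symbols $T$, $q$, $x^*$, and $e_n$ are notation introduced here for illustration, and the constant-factor error model in the last line is a simplifying assumption rather than a property of any real architecture. A mapping $T$ is a contraction with factor $q$ when

$$
d\big(T(x), T(y)\big) \le q \, d(x, y) \quad \text{for all } x, y, \qquad 0 \le q < 1 .
$$

If $x^* = T(x^*)$ is the fixed point and $x_{n+1} = T(x_n)$, then applying the inequality once per cycle gives

$$
d(x_n, x^*) \le q^{\,n} \, d(x_0, x^*) .
$$

In the simplest linear case, where the error is scaled by the same factor every cycle,

$$
e_{n+1} = q \, e_n \quad \Longrightarrow \quad e_n = q^{\,n} e_0 ,
$$

so for $e_0 \neq 0$ the error tends to zero when $q < 1$, stays constant when $q = 1$, and grows without bound when $q > 1$.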

I want to be direct about what this means in operational language. Every organization that deploys a recursive intelligence architecture is implicitly making a bet about which side of that boundary it operates on. The organizations that ensure their architectures are contractive, that each learning cycle reduces error rather than amplifying it, will compound their advantage over time. The organizations that do not will discover the problem only after the divergence has become severe, because a diverging architecture feels productive until the errors become large enough to be visible, and by then the correction cost is enormous.

What Good Architecture Requires

If the recursive loop is the mechanism, the natural question follows. What design requirements ensure that the loop converges rather than diverges? I have identified four that I believe are necessary conditions. Whether they are jointly sufficient is an open question that I am actively researching.9

Recursive self-correction

The architecture must update its own model in response to the outcomes of its own decisions. This is the contraction mapping property applied to organizational learning.10 Each decision cycle must produce not only an action but also a measured outcome that feeds back into the model's parameters. The learning rate, the speed and fidelity with which the model incorporates new information, is the practical equivalent of the contraction factor. Too slow, and the model fails to track a changing environment. Too fast, and the model overreacts to noise, mistaking random variation for structural change. The recursive self-correction requirement is what separates an intelligence architecture from a static decision-support tool. A tool gives you an answer. An architecture gives you an answer, measures how good that answer was, and uses the measurement to give you a better answer next time.

In concrete terms, consider a demand sensing model that predicts a promotional lift of fifteen percent for a specific product in a specific region. The promotion runs. Actual lift is nine percent. A static tool does not incorporate that error. The next time a similar promotion runs, it will predict fifteen percent again. A recursive architecture incorporates the six-point error, adjusts the model's sensitivity to that type of promotion in that region, and produces a more accurate prediction next time. Multiply that correction across thousands of products, hundreds of regions, continuous promotional activity, and the recursive advantage becomes structural.
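
The promotional-lift example above can be made concrete with a minimal sketch. It assumes a simple proportional update rule and a hypothetical `learning_rate` of 0.5. Neither comes from the original text, and a production model would adjust many parameters rather than a single number. A learning rate of zero reproduces the static tool.

```python
def update_lift_estimate(predicted: float, actual: float, learning_rate: float) -> float:
    """Move the estimate toward the observed outcome by a fraction of the error."""
    error = actual - predicted
    return predicted + learning_rate * error


def run_promotions(initial: float, observed_lifts: list[float],
                   learning_rate: float) -> list[float]:
    """Return the sequence of lift predictions across repeated promotions."""
    estimate = initial
    history = [estimate]
    for actual in observed_lifts:
        estimate = update_lift_estimate(estimate, actual, learning_rate)
        history.append(estimate)
    return history


# Five similar promotions, each with an actual lift of nine percent.
observed = [0.09] * 5

# Static tool: never incorporates the error, so it keeps predicting fifteen percent.
static_history = run_promotions(0.15, observed, learning_rate=0.0)

# Recursive architecture (hypothetical learning rate): absorbs half the error each time.
recursive_history = run_promotions(0.15, observed, learning_rate=0.5)

print("static:   ", [f"{p:.3f}" for p in static_history])
print("recursive:", [f"{p:.3f}" for p in recursive_history])
```

After the first promotion the recursive estimate moves from fifteen percent to twelve percent, and it keeps closing on nine percent with each further run, while the static prediction never changes.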

Anticipation

The architecture must contain a model of its own future states, not merely a model of the current environment. Robert Rosen's work on anticipatory systems established this requirement formally. A system that can only react to present conditions will always be outpaced by a system that can represent and act on anticipated future conditions.11 In practical terms, this means the intelligence layer must run forward-looking scenarios continuously, not as a quarterly exercise but as an embedded function. When a supply chain architecture can simulate the downstream effects of a tariff change before the tariff takes effect, it operates anticipatorily. When it can only detect and respond to the tariff's impact after the fact, it operates reactively. The distinction compounds over every crisis cycle.

Requisite variety

The architecture must be able to generate as many distinct responses as the environment can generate distinct disturbances. This is W. Ross Ashby's Law of Requisite Variety, first articulated in 1956. Only variety can absorb variety.12 A planning system that produces one forecast per month has limited variety. A continuously operating intelligence architecture that can adjust sourcing, pricing, production, and logistics independently and concurrently has high variety. The reason this matters in the Polycrisis⁴ context is that compounding crises generate combinatorial variety. A tariff change that coincides with a logistics disruption that coincides with a demand shift produces an exponentially larger space of possible states than any one of those disruptions alone. If the architecture cannot match that variety, it will default to a simplified model, and the simplification will be the source of the errors that the recursive loop amplifies.
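
A small counting sketch shows why coinciding disruptions outrun a simplified model. The state lists below are hypothetical, chosen only to make the arithmetic visible, and `has_requisite_variety` is an illustrative helper that encodes the counting form of the requirement as stated above.

```python
from itertools import product

# Hypothetical disturbance states for three independent sources of disruption.
tariff_states = ["none", "low", "high"]
logistics_states = ["normal", "port delay", "route closure", "carrier shortage"]
demand_states = ["falling", "flat", "rising", "spiking", "collapsing"]

# Treated one at a time, the environment presents the sum of the individual states.
separate_count = len(tariff_states) + len(logistics_states) + len(demand_states)

# When disruptions coincide, every combination is a distinct joint state.
joint_states = list(product(tariff_states, logistics_states, demand_states))


def has_requisite_variety(num_responses: int, num_disturbances: int) -> bool:
    """Counting form of the requirement: responses must at least match disturbances."""
    return num_responses >= num_disturbances


print(f"states considered separately: {separate_count}")
print(f"joint states when disruptions coincide: {len(joint_states)}")
print("a response set sized for the separate view is sufficient:",
      has_requisite_variety(separate_count, len(joint_states)))
```

The joint count is the product of the individual counts, so each additional coinciding disruption multiplies the space the architecture has to cover.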

Concurrent multi-timescale processing

The architecture must operate across near-term, medium-term, and long-term timescales simultaneously, which is the Timescale Coherence concept I introduced in Polycrisis³.2 A supply disruption that will resolve in two weeks, a tariff regime that will reshape trade flows over two years, and a quantum computing timeline that will determine cryptographic security over two decades are all active simultaneously. The architecture must perceive and act on all three without allowing the urgency of the near-term to crowd out the importance of the long-term. This is the requirement that sequential planning cascades structurally cannot meet. A monthly Sales and Operations Planning cycle that runs forecasting, then supply planning, then financial reconciliation in sequence can represent one timescale at a time. It cannot represent the interaction between timescales, which is where the compounding risk lives. Only two in five companies consider their S&OP process effective, a statistic from 2015 research that subsequent industry surveys have not materially improved upon.13 The reason is structural, not operational. The sequential cascade was designed for a world in which each timescale could be addressed independently. That world no longer exists.

These four requirements are demanding. They require a different kind of intelligence platform than the point solutions and isolated AI pilots that most organizations have deployed. BCG's Build for the Future 2025 study found that sixty percent of companies qualify as laggards and report minimal revenue and cost gains from AI despite substantial investment.14 McKinsey's 2025 State of AI survey reached a compatible conclusion from a different angle, reporting that nearly two-thirds of organizations have not yet begun scaling AI across the enterprise and that the majority remain in experimenting or piloting stages.15 But the four requirements follow directly from the nature of the problem. If the environment is non-stationary, the architecture must anticipate. If the environment generates combinatorial variety, the architecture must match it. If the architecture's own actions change the environment, it must correct itself recursively. If the disruptions operate on multiple timescales simultaneously, the architecture must process them concurrently. Each requirement addresses a specific structural feature of the Polycrisis⁴ condition.

The recursive loop has a vulnerability that must be stated plainly. Every cycle of sensing, modeling, and acting depends on the quality of the incoming data. If the sensing layer ingests corrupted, delayed, or AI-contaminated information, the recursive mechanism amplifies the error rather than correcting it. Data integrity is not a supporting function of the architecture. It is a precondition for the architecture's stability.

The architecture does not replace human judgment. It restructures the role of human decision-makers from producers of plans to evaluators of options that the architecture generates continuously. This requires a different organizational design and a different talent profile than monthly S&OP cycles demand. The organizations that treat the intelligence architecture as a technology deployment without redesigning the human roles around it will not achieve the recursive stability the architecture is designed to produce.

A Computational Illustration

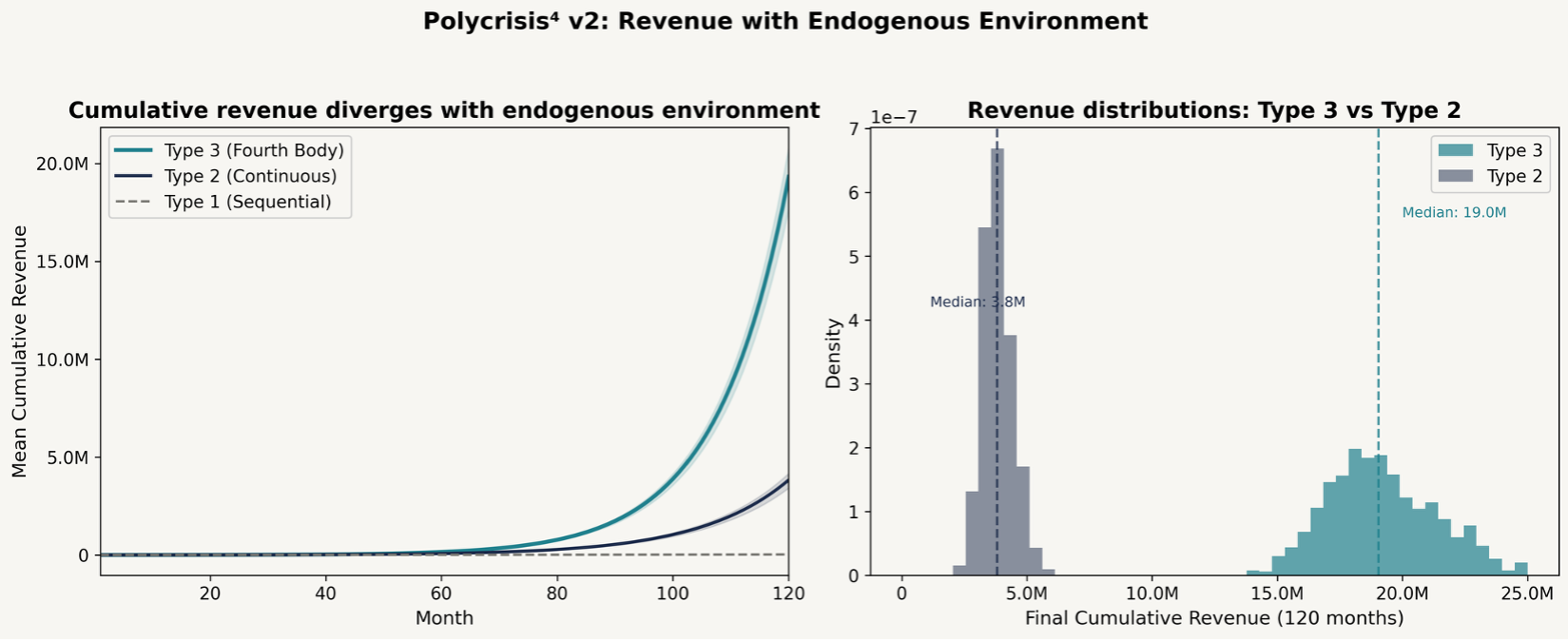

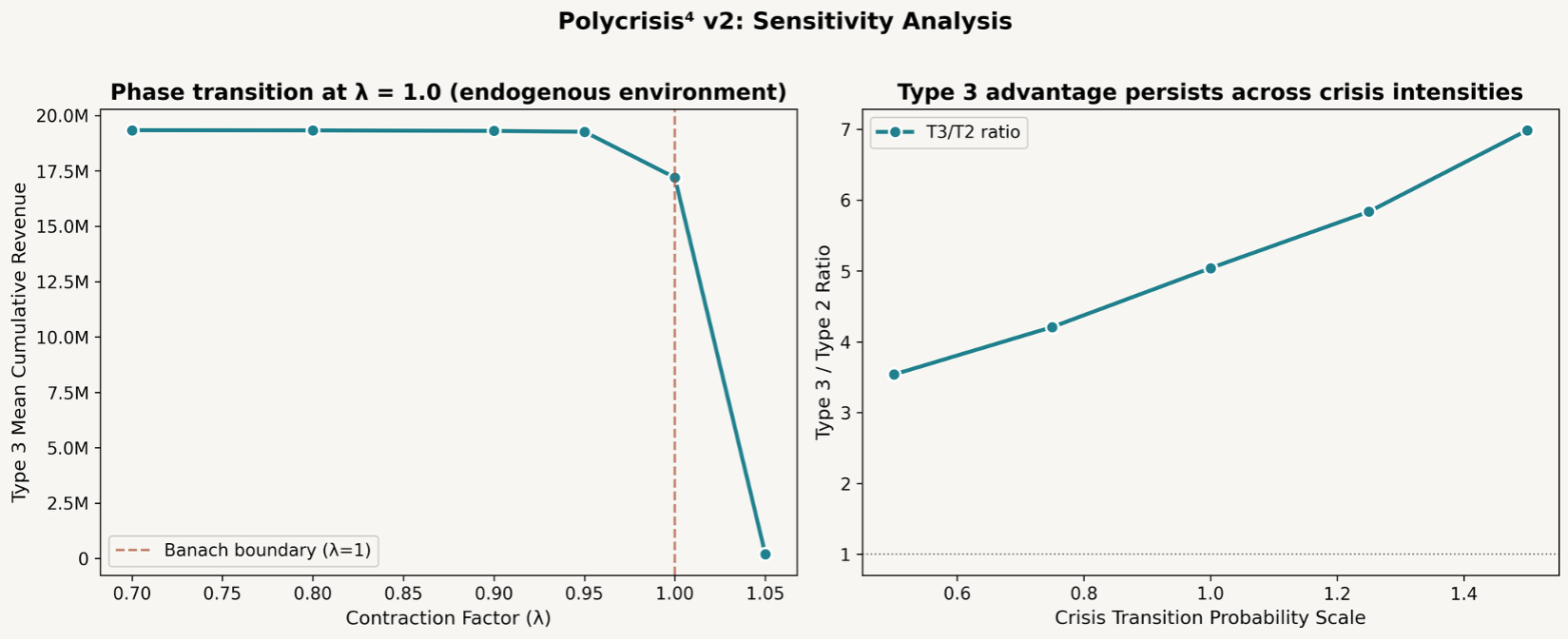

To explore whether the framework's logic is internally consistent, I developed a stylized computational model. The model has not been independently validated, and the results should be understood as an illustration of the framework's dynamics, not as empirical evidence for them. The value of the exercise is pedagogical. It shows what the framework predicts in a simplified environment.

The simulation code was generated by AI and has not been independently reviewed or validated against empirical data. The specific magnitudes (approximately five-fold advantage, 1.62 times recursive contribution) are properties of the model's parameter choices, not findings about real-world performance.9 Independent replication and empirical calibration are necessary before any quantitative conclusions can be drawn.

The simulation models three types of enterprises operating in a shared environment with stochastic crisis states. Type 1 enterprises use sequential planning with monthly update cycles. Type 2 enterprises use continuous sensing with real-time response. Type 3 enterprises add the recursive self-correction loop. The architecture updates its own model based on the outcomes of its own decisions, and those decisions feed back into the competitive environment. In the endogenous version of the simulation, Type 3 enterprises' actions change the crisis transition probabilities for everyone, making the environment more turbulent for enterprises that lack the recursive capability.

Over 1,000 simulation runs across 120 months, the model illustrates that the recursive architecture consistently outperforms.9 The advantage is directionally consistent across every single run, across five different random seeds, and across a range of crisis volatility levels.

Three findings deserve attention.

First, the advantage compounds over time. The gap between recursive and non-recursive architectures widens with each crisis cycle. This is consistent with the contraction mapping prediction. Each cycle of recursive self-correction improves model accuracy, which improves decision quality, which compounds through subsequent cycles. In more volatile environments, the advantage grows larger, because there are more crisis cycles through which the compounding can operate. Type 3 revenue remains remarkably stable across crisis intensity levels, while Type 2 revenue drops significantly as volatility rises. The model illustrates that the recursive architecture earns its greatest value when conditions are most turbulent.9

Second, the recursive mechanism provides independent value. Ablation studies, which systematically remove each advantage one at a time, illustrate that even when the recursive architecture is given the same sensing quality and response speed as the continuous-sensing architecture, with no special crisis-phase bonuses, the recursive self-correction loop alone still produces a meaningful performance advantage of 1.62 times. The recursive mechanism is responsible for approximately 15 percent of the total architectural advantage, with faster response contributing about 45 percent and crisis-phase positioning contributing about 38 percent (simulation code and parameters are documented in the companion Polycrisis⁴ Research Agenda and are available upon request for independent verification).9 I want to be transparent about what this means. The recursive self-modeling is a real and isolable contributor, but it is not the dominant one. Operational speed and the ability to capitalize on crisis windows contribute more. The recursive loop may be most valuable as the mechanism that enables those operational capabilities, rather than as an independent performance driver.

Third, and most striking, is the phase transition at the convergence boundary. When the contraction factor is below one, the system converges and performs well. Revenue varies by less than half a percent across the entire convergent range. When the contraction factor crosses one, the system collapses. Model error does not degrade gradually. It explodes. Revenue falls by a factor of roughly 95 from the convergent regime, to a level worse than that of enterprises that never deployed a recursive architecture at all.9
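
The sharpness of the boundary can be seen even in a far simpler toy than the simulation described here. The sketch below is not the simulation code. It assumes only that each cycle multiplies model error by a constant contraction factor, and it uses a 120-cycle horizon to match the 120 months mentioned above. The function name and the swept values are illustrative.

```python
def error_after(contraction_factor: float, cycles: int = 120,
                initial_error: float = 1.0) -> float:
    """Model error after repeated cycles, each scaling the error by a constant factor."""
    error = initial_error
    for _ in range(cycles):
        error *= contraction_factor
    return error


# Sweep across the convergence boundary at a contraction factor of one.
for k in (0.90, 0.95, 0.99, 1.00, 1.01, 1.05, 1.10):
    print(f"contraction factor {k:.2f}: error after 120 cycles = {error_after(k):.3e}")
```

Over a long horizon, small departures from one in either direction are amplified by repetition, which is why factors just below and just above the boundary end up in very different places.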

This is the simulation's most important architectural insight. The difference between a well-designed and a poorly designed recursive architecture is not incremental. It is categorical. A recursive loop that corrects itself with each cycle builds compounding advantage. A recursive loop that amplifies error with each cycle produces compounding destruction. And the boundary between the two is a knife edge, not a gentle slope.

The simulation also reveals a practical design constraint. The recursive architecture's advantage disappears when implementation costs exceed approximately 1.6 percent of monthly revenue.9 Above that threshold, the overhead of maintaining the continuous sensing loop destroys more value than the recursive self-correction creates. This means the architecture must be implemented efficiently. The investment is justified, but it is limited, and organizations that over-engineer their sensing infrastructure may find themselves on the wrong side of the cost boundary.

I want to emphasize the appropriate frame for this illustration. The simulation is a stylized Monte Carlo model with judgment-based parameters. The absolute magnitudes are artifacts of compound growth over a 10-year horizon. The directional patterns, the compounding advantage, the independent contribution of the recursive mechanism, and the sharp phase transition, are the meaningful signals. They are consistent with the convergence hypothesis built into the framework, but they illustrate it rather than prove it.9

A Useful Analogy

There is a reason the Polycrisis framework has been using superscript notation, and it connects to a problem in physics that is worth a brief mention. In Newtonian mechanics, the two-body problem has a clean analytical solution. You can predict the motion of two gravitational bodies indefinitely. The three-body problem does not. Adding a third body introduces chaotic dynamics that make long-term prediction impossible in general. Adding a fourth body does not merely add complexity. It changes the qualitative character of the system again, because the fourth body interacts with all three existing bodies simultaneously, creating new feedback loops that did not exist in the three-body configuration.16 The enterprise intelligence architecture is, in this heuristic sense, a fourth body. It interacts with all three crisis dimensions at once, and its presence changes the dynamics of the entire system, for its own organization and for everyone else's. The analogy should not be pushed too far. Enterprises are not point masses. Competitive systems do not obey conservation laws. But as a teaching device for why adding the intelligence architecture changes the nature of the problem rather than merely its difficulty, the four-body parallel is instructive.

The Imperative

I have spent twenty-five years working with organizations in volatile environments, from military supply chains to global consumer goods to pharmaceutical manufacturing. I have watched the cycle of crisis and false resolution repeat across every sector. The pattern is consistent. The organizations that survive and compound their position are never the ones that responded most heroically in the acute phase. They are the ones that maintained their sensing and learning architectures through the quiet periods, when doing so was hardest to justify. I described that pattern in Polycrisis³, and the argument remains unchanged.2 What Polycrisis⁴ adds is the recognition that the architecture is a participant, not just a tool, and that its deployment creates a new competitive reality for everyone in the market.

What is different now is the fourth body. The organizations deploying recursive intelligence architectures today are not simply gaining efficiency or improving their forecasts. They are changing the competitive terrain for everyone. When an enterprise deploys continuous demand sensing and dynamic supply optimization, it does not just improve its own fill rates. It consumes market capacity, captures margin, and generates price signals that reshape the environment its competitors must operate in. That is the architectural endogeneity in action. The fourth body does not wait for the next crisis. It operates during the false resolution when most organizations have stood down their sensing layers. And with each cycle of recursive self-correction, the gap between the organizations that have deployed and those that have not grows wider and harder to close.

This is a structural commitment, not a technology procurement decision. The intelligence architecture must be designed to converge, not diverge. It must anticipate, match the variety of the environment, correct itself recursively, and process across multiple timescales concurrently. Those are demanding requirements. They require sustained investment, institutional discipline, and architectural thinking that most organizations have not yet undertaken.

I want to connect this back to the self-liquidating proof point model I introduced in Polycrisis³.2 The entry point remains the same. One high-value process, one instrumented intelligence layer, one fiscal year to demonstrate measurable return. But the framing is different. In Polycrisis³, the proof point was about building resilience. In Polycrisis⁴, it is about entering the recursive loop on the right side of the convergence boundary. The proof point is the first iteration of the recursive cycle. If it is designed to learn from its own outcomes, it sets the architecture on a convergent path. If it is designed as a static optimization, it misses the fourth-body dynamic entirely.

The window for making that commitment is the false resolution itself, the period of apparent calm when the argument for investment is hardest to make and the cost of inaction is hardest to see. Under the Budget Lab at Yale's baseline analysis, the Section 122 bridge tariffs are scheduled to expire in late July 2026.17 The AI competitive gap compounds over time.1 The organizations that use this window will compound their advantage through the next crisis cycle and the one after that. The organizations that wait for the next acute phase to make the decision will find that their competitors have already changed the terrain.

Each crisis cycle that passes without the commitment narrows the window further, because the organizations that have already deployed are feeding their advantages back into the environment, making recovery harder for those that have not. This is the endogenous dynamic in practice. The fourth body does not wait. It operates continuously, through every phase of the crisis cycle, including the false resolution. And with each cycle of recursive self-correction, the gap between those who have deployed and those who have not becomes harder to close.

The fourth body has entered the game. The question for every leadership team is whether it will be their fourth body, or someone else’s.

The intelligence architecture is no longer a tool that observes the crisis. It is a participant that changes it. The organizations that design their fourth body to learn from every cycle will compound advantage. The organizations that do not will compound exposure. The boundary between the two is sharp. The time to choose a side is now.

Stephen F. DeAngelis

Princeton, NJ

April 2026

Polycrisis²™, Polycrisis³™, Polycrisis⁴™, and Timescale Coherence™ are trademarks of Stephen F. DeAngelis. Sense, Think, Act & Learn™ is a trademark of Enterra Solutions.

About the Author

Stephen F. DeAngelis is the founder, president, and CEO of Enterra Solutions and Massive Dynamics, two companies that apply artificial intelligence and advanced mathematics to complex enterprise challenges. His career spans international relations, national security, and commercial technology. He has served in visiting research affiliations with Princeton University, the Oak Ridge National Laboratory, the Software Engineering Institute at Carnegie Mellon University, and the MIT Computer Science and Artificial Intelligence Laboratory. He is a founding member of the Forbes Technology Council. DeAngelis holds patents in autonomous decision science and has been recognized by Forbes as a Top Influencer in Big Data and by Esquire magazine as the 'Innovator' in its Best and Brightest issue.

About the Stephen DeAngelis Explainer Brief Series

The Stephen DeAngelis Explainer Brief series applies critical reasoning to the complex issues facing society today. In an era of compounding uncertainty and deepening division, the series aims to build understanding and community by making consequential topics accessible through rigorous analysis, current evidence, and honest assessment. Each installment is written in the belief that clarity of thought is itself a form of leadership.

Readers who wish to explore the proof-point entry model for their organization are welcome to reach out at www.deangelisreview.com.

Notes

1. Boston Consulting Group, "AI Leaders Outpace Laggards with Double the Revenue Growth and 40% More Cost Savings," press release, September 30, 2025. The specific finding is that compared to laggards, future-built companies achieve 1.7 times revenue growth, 3.6 times three-year total shareholder return, and 1.6 times EBIT margin. Research is based on BCG's Build for the Future 2025 Global Study of 1,250 senior executives and AI decision makers across nine industries. https://www.bcg.com/press/30september2025-ai-leaders-outpace-laggards-revenue-growth-cost-savings

2. DeAngelis, Stephen F., "Polycrisis³: The Third Dimension: When the Crisis Compounds, the Architecture Decides," A Stephen DeAngelis Explainer Brief, No. 2, DeAngelisReview, April 2026. www.deangelisreview.com

3. Soros, George, "Fallibility, Reflexivity, and the Human Uncertainty Principle," Journal of Economic Methodology, Vol. 20, No. 4, 2013, pp. 309-329.

4. The Lucas critique in macroeconomics established that when economic agents change their behavior in response to a new policy, the statistical relationships the policy was based on break down. The same structural logic applies to enterprise intelligence architectures that change the competitive environment they were designed to model. See: Wikipedia, "Lucas critique." https://en.wikipedia.org/wiki/Lucas_critique

5. Von Foerster, Heinz, "Objects: Tokens for (Eigen-)Behaviors," ASC Cybernetics Forum, Vol. 8, Nos. 3-4, 1976, pp. 91-96. Originally presented at the University of Geneva on June 29, 1976, on the occasion of Jean Piaget's 80th birthday, and reprinted as a chapter in Von Foerster, Heinz, Understanding Understanding: Essays on Cybernetics and Cognition, Springer, New York, 2003. This is the paper in which Von Foerster introduces the eigenform concept, the stable pattern that emerges when a recursive process converges and that grounds the architectural endogeneity argument in this brief. https://cepa.info/fulltexts/1270.pdf

6. Alicke, Knut and Tacy Foster, with Vera Trautwein, "The Way Forward: McKinsey Global Supply Chain Leader Survey," McKinsey & Company, October 14, 2024. The article reports findings from the fifth annual McKinsey Global Supply Chain Leader Survey. Nine in ten respondents reported encountering supply chain challenges in 2024. On average, companies take two weeks to plan and execute a response to a disruption. https://www.mckinsey.com/capabilities/operations/our-insights/supply-chain-risk-survey-2024

7. Teece, David J., Gary Pisano, and Amy Shuen, "Dynamic Capabilities and Strategic Management," Strategic Management Journal, Vol. 18, No. 7, 1997, pp. 509-533. See also Teece, David J., "Explicating Dynamic Capabilities: The Nature and Microfoundations of (Sustainable) Enterprise Performance," Strategic Management Journal, Vol. 28, No. 13, 2007, pp. 1319-1350.

8. MacKenzie, Donald, "Is Economics Performative? Option Theory and the Construction of Derivatives Markets," Journal of the History of Economic Thought, Vol. 28, No. 1, 2006, pp. 29-55.

9. DeAngelis, Stephen F., Polycrisis⁴ Research Agenda, working paper, April 2026. Simulation methodology, ablation results, robustness analysis, and full source code are available from the author upon request at www.deangelisreview.com. See also the accompanying simulation results document.

10. The Banach fixed-point theorem establishes that a contraction mapping on a complete metric space has a unique fixed point, and that iterative application of the mapping converges to that point. See: Wikipedia, "Banach fixed-point theorem." https://en.wikipedia.org/wiki/Banach_fixed-point_theorem

11. Rosen, Robert, Anticipatory Systems: Philosophical, Mathematical, and Methodological Foundations, Pergamon Press, 1985. Second edition, Springer, 2012.

12. Ashby, W. Ross, An Introduction to Cybernetics, Chapman & Hall, London, 1956. The Law of Requisite Variety states that a controller must have at least as many available responses as the system it controls has possible disturbances.

13. Cecere, Lora, "Why Is Sales and Operations Planning So Hard?", Forbes, January 21, 2015. The two-in-five effectiveness figure is from this 2015 research. https://www.forbes.com/sites/loracecere/2015/01/21/why-is-sales-and-operations-plannning-so-hard/

14. Apotheker, Jessica, Vinciane Beauchene, Nicolas de Bellefonds, Patrick Forth, Marc Roman Franke, Michael Grebe, Nina Kataeva, Santeri Kirvelä, Djon Kleine, Romain de Laubier, Vladimir Lukic, Amanda Luther, Mary Martin, Jeff Walters, and Christoph Schweizer, "The Widening AI Value Gap: Build for the Future 2025," Boston Consulting Group, September 2025. The report finds that 60 percent of companies are reaping hardly any material value from AI, reporting minimal revenue and cost gains despite substantial investment, while 5 percent qualify as "future-built" AI leaders. Based on BCG's Build for the Future 2025 Global Study (n = 1,250). Web page: https://www.bcg.com/publications/2025/are-you-generating-value-from-ai-the-widening-gap. Full PDF: https://media-publications.bcg.com/The-Widening-AI-Value-Gap-Sept-2025.pdf

15. Singla, Alex, Alexander Sukharevsky, Lareina Yee, and Michael Chui, with Bryce Hall and Tara Balakrishnan, "The State of AI: Global Survey 2025," QuantumBlack, AI by McKinsey, McKinsey & Company, November 5, 2025. Nearly two-thirds of respondents say their organizations have not yet begun scaling AI across the enterprise, with the majority remaining in experimenting or piloting stages. https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai

16. Leigh, Nathan W.C., Nicholas C. Stone, Aaron M. Geller, Michael M. Shara, Harsha Muddu, Diana Solano-Oropeza, and Yancey Thomas, "The chaotic four-body problem in Newtonian gravity, I. Identical point-particles," Monthly Notices of the Royal Astronomical Society, Vol. 463, No. 3, December 2016, pp. 3311-3325. DOI: 10.1093/mnras/stw2178

17. The Budget Lab at Yale, "State of U.S. Tariffs: April 8, 2026," April 8, 2026. The Budget Lab's baseline analysis assumes the Section 122 tariffs expire 150 days after enactment, placing expiration in late July 2026. https://budgetlab.yale.edu/research/state-us-tariffs-april-8-2026